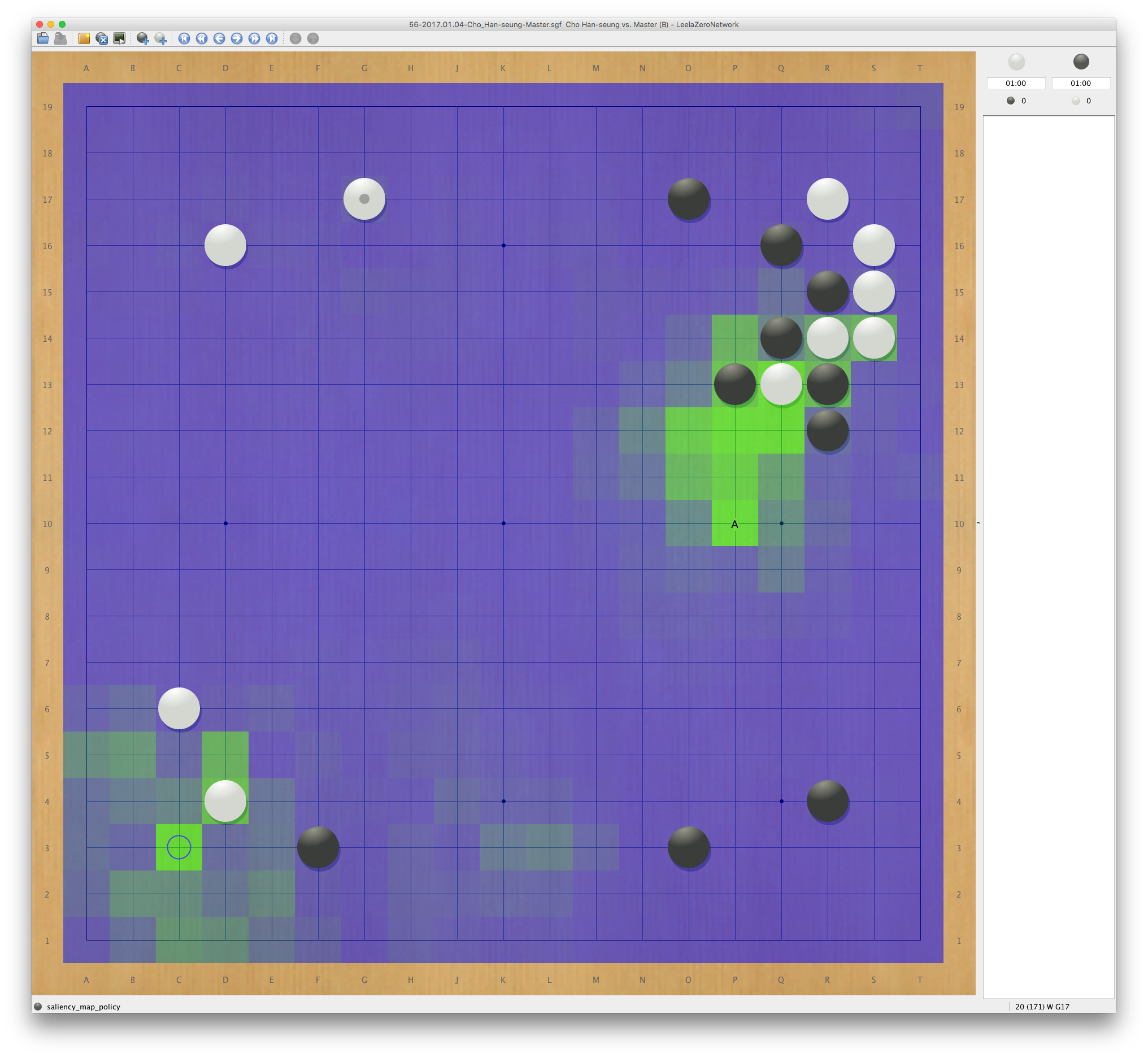

Various games

Rogue

We use Rogue to study several interesting problems in reinforcement learning, including limited observation, maze exploration, survival, and generalization.

- Kanagawa, Y. and T. Kaneko “Rogue-Gym: A New Challenge for Generalization in Reinforcement Learning,” in IEEE Conference on Games (CoG), pp. 1–8 (2019), DOI: 10.1109/CIG.2019.8848075

- https://github.com/kngwyu/rogue-gym

Catan

Catan is a multi-player imperfect-information game that involves negotiation among players.

- Gendre, Q. and T. Kaneko “Playing Catan with Cross-Dimensional Neural Network,” in ICONIP, pp. 580–592: Springer (2020a), DOI: 10.1007/978-3-030-63833-7_49

- https://github.com/Swynfel/rust-catan

Ceramic

Ceramic is a research environment based on Azul, a multi-player perfect-information game.